| R. Kenny Jones¹, Aalia Habib¹, Rana Hanocka², Daniel Ritchie¹ |

| ¹Brown University, ²University of Chicago |

@article{jones2022NGSP,

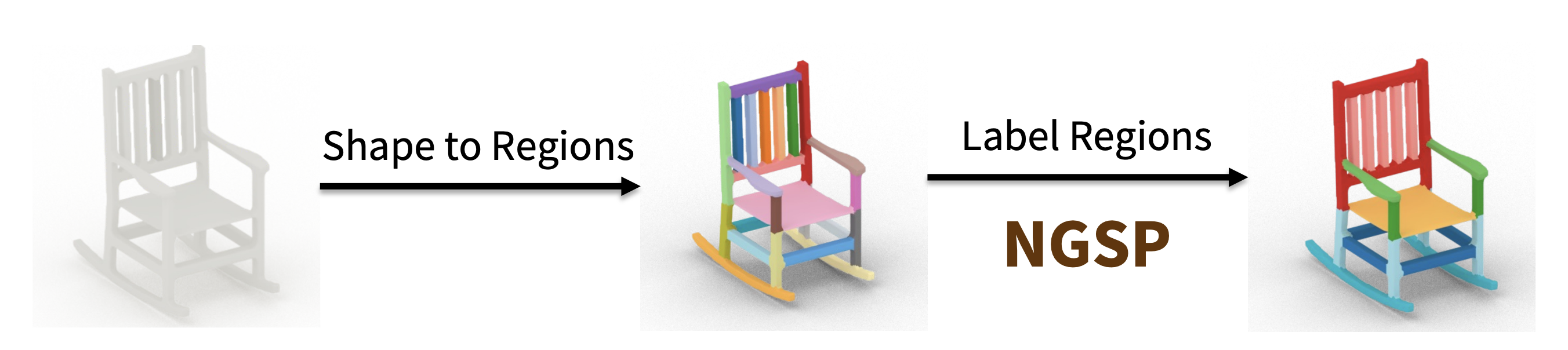

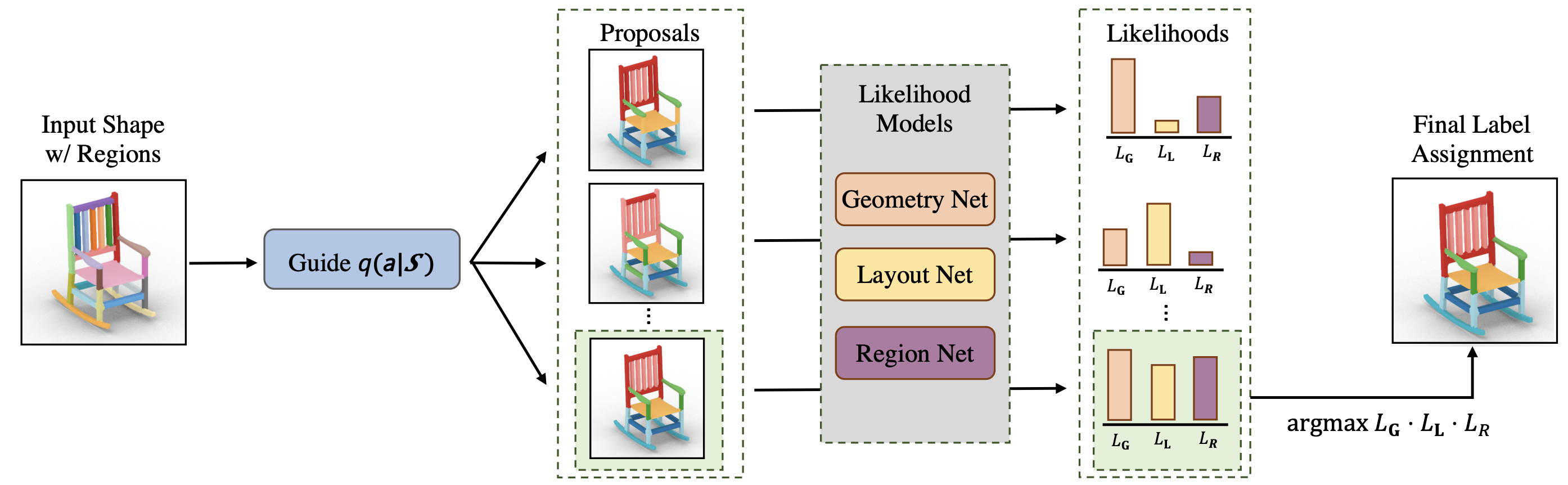

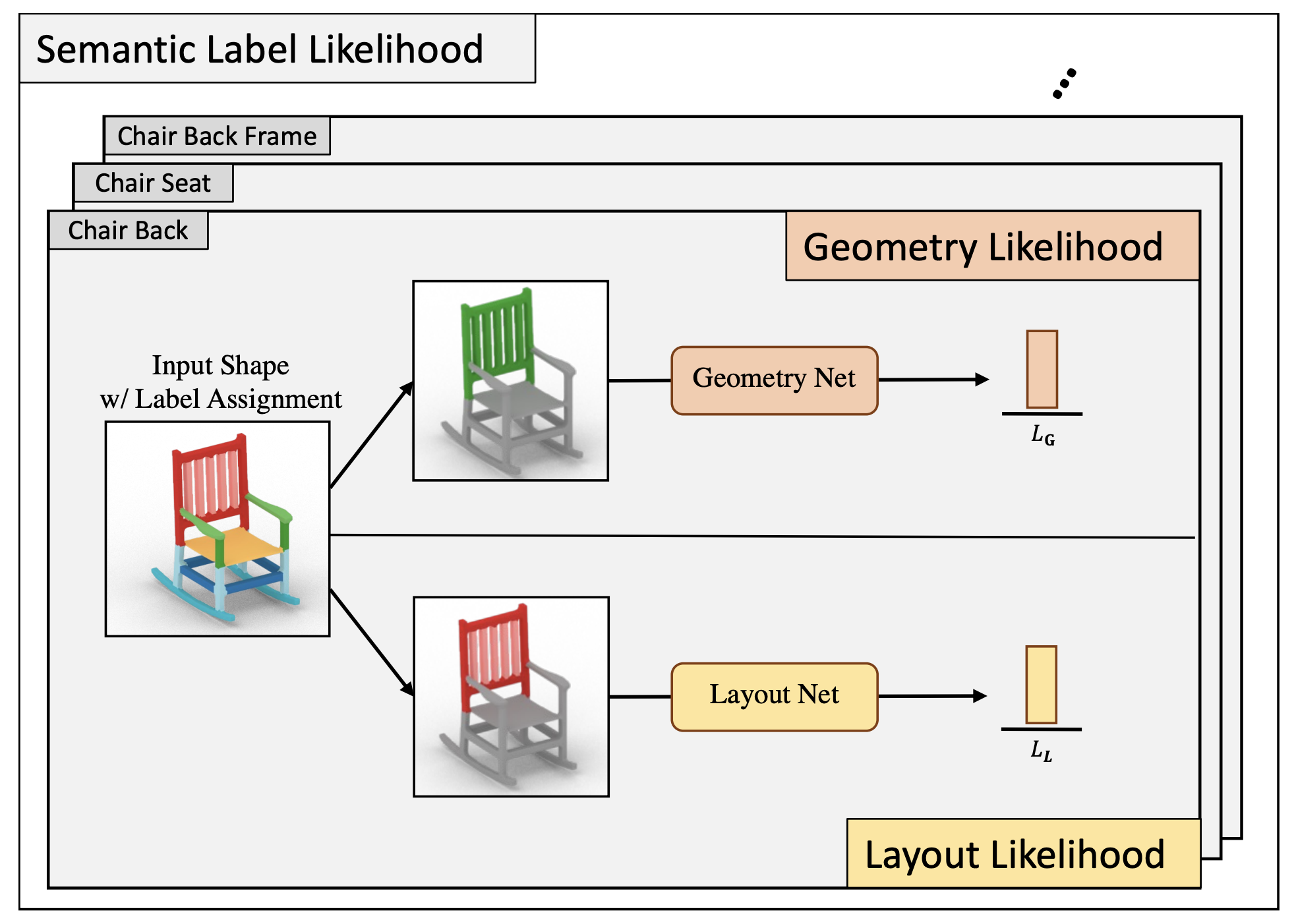

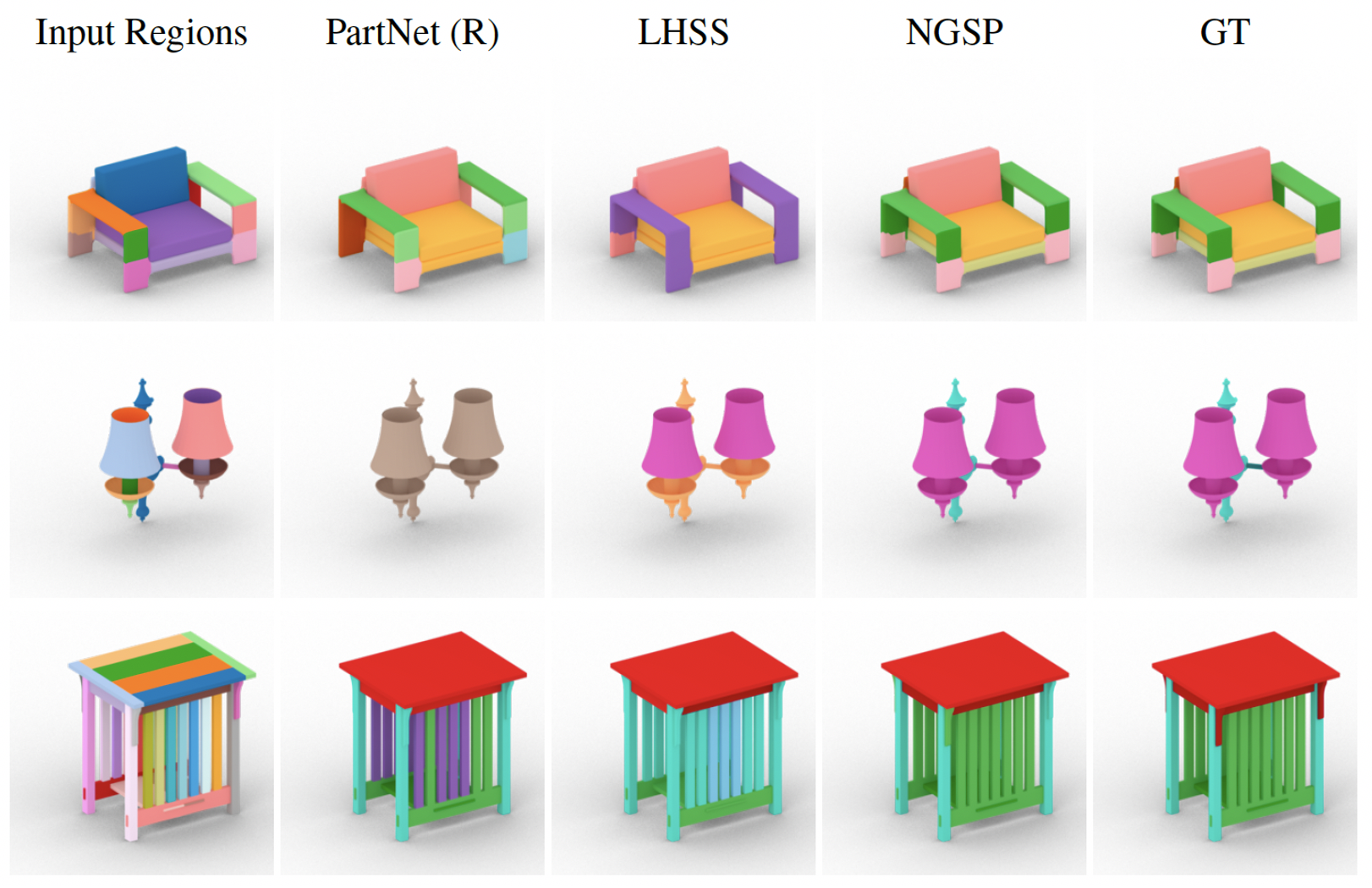

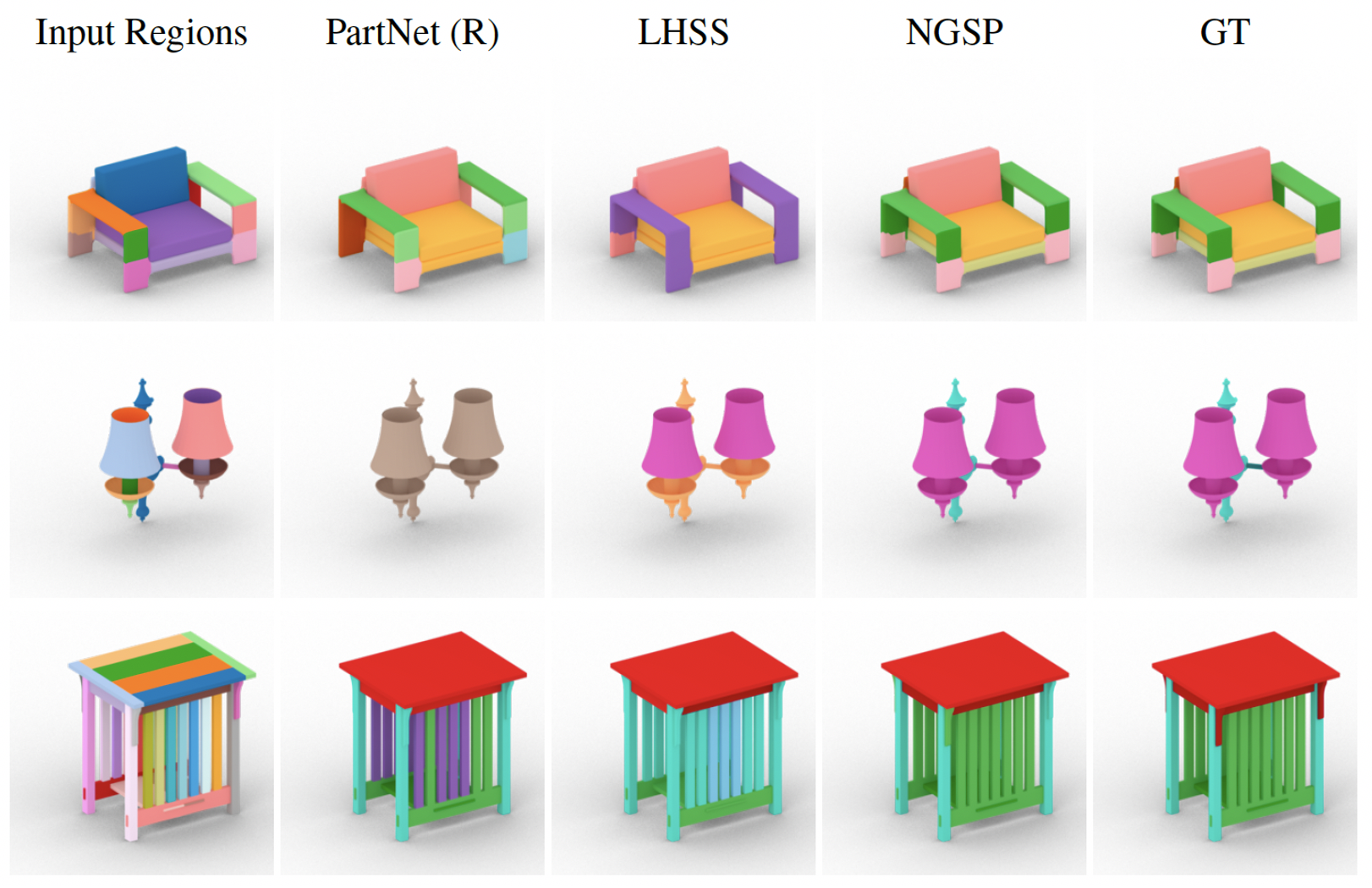

title={The Neurally-Guided Shape Parser: Grammar-based Labeling of 3D Shape Regions with Approximate Inference},

author={Jones, R. Kenny and Habib, Aalia and Hanocka, Rana and Ritchie, Daniel},

journal={The IEEE Conference on Computer Vision and Pattern Recognition (CVPR)},

year={2022}

}